20 Years of CUDA: Honoring the Architects of the Accelerated Age

What began in 2006 as a bold parallel computing bet has evolved into the foundational heartbeat of modern science and AI.

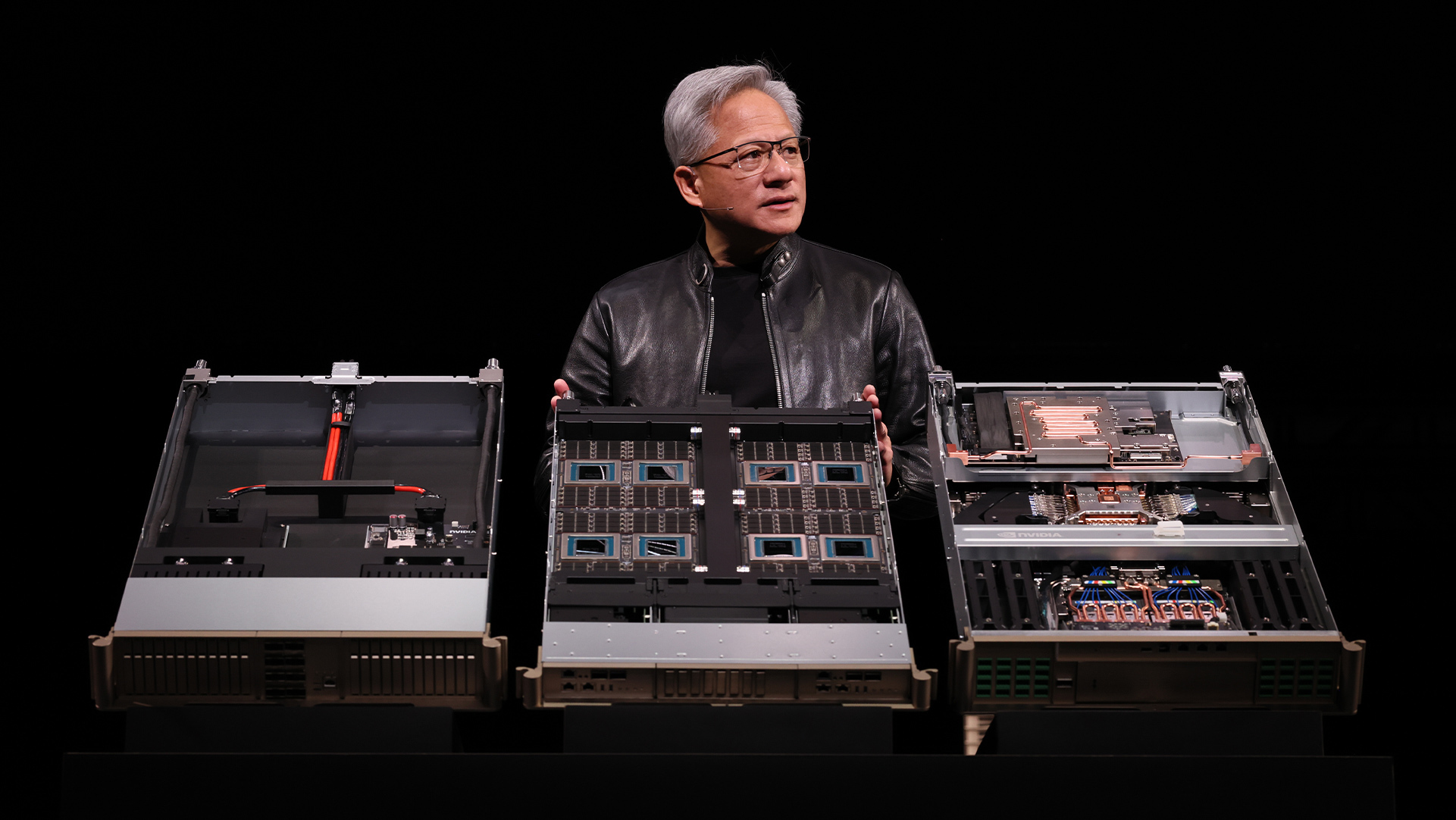

At GTC, NVIDIA is marking two decades of CUDA — representing the efforts of over 6 million developers innovating across every layer of the computing stack. Today, it serves as a generational bridge between the pioneers who wrote the first kernels and the next wave of builders deploying trillion-parameter AI models.

Led by NVIDIA CUDA Architect Stephen Jones, a panel at GTC Wednesday featured a group of researchers and engineers from Jump Trading, Meta Superintelligence Labs and NVIDIA who highlighted the decades of innovation behind CUDA, how it helps developers solve some of the world’s most complex problems — and how systems like the NVIDIA DGX Spark desktop AI supercomputer will enable the next generation of CUDA developers.

The group shared memories of the early days of CUDA — when “nobody wanted GPUs,” said Paulius Micikevicius, a software engineer at Meta Superintelligence Labs. “We had to go and beg them to consider using GPUs.”

During that time, Wen-Mei Hwu, senior distinguished research scientist and senior research director at NVIDIA, then a professor at the University of Illinois Urbana-Champaign, decided to build a 200-GPU system in two months with a group of grad students.

“A couple of weeks later, 200 GPU boards arrived, and power supply and everything — but there’s no chassis. So we ended up building wood frames for each of these boards … and we ran the Green500 [benchmark] and we got No. 3,” Hwu said. “That was the moment I realized that the energy efficiency of GPUs has incredible potential.”

As the scale of accelerated computing has shifted to rack-scale systems and AI factories, the panelists see desktop AI systems like DGX Spark as a new way forward for prototyping and early development.

“As long as you have that capability to do that initial exploration and something that fits on your desk or your lap, that’s the critical thing,” said Kate Clark, distinguished devtech engineer at NVIDIA. “I don’t see that going anywhere anytime soon. We’ll always have CUDA everywhere.”

Monday, March 16, 1:30 p.m. PT

NVIDIA cuDF and cuVS Adopted by World’s Leading Data Platforms, Fueling Modern Enterprise Data Processing

Enterprises are generating hundreds of zettabytes each year, and organizations are racing to turn that information into insights. NVIDIA cuDF and cuVS — accelerated data libraries built on NVIDIA CUDA‑X — are being adopted by data platforms across industries to deliver up to 5x faster performance while reducing costs for structured and unstructured data processing.

Integrated with the world’s most widely used open source data engines — downloaded over 200 million times monthly by developers — these libraries are harnessed across enterprise data platforms, databases and data lakes. This helps organizations accelerate innovation, develop more accurate models and process more data while managing costs.

For structured data, NVIDIA cuDF accelerates open source data processing engines such as Apache Spark, Presto, DuckDB, Polars and Velox, delivering up to 5x faster processing compared with CPU-only deployments.

For unstructured data — which represents 80% of today’s enterprise data and is growing rapidly — NVIDIA cuVS accelerates leading engines including FAISS, Amazon OpenSearch Service and Milvus. This helps agents and applications extract context, facts and recommendations from vast stores of text, images and video in a fraction of the time.
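To make the vector-search workload concrete, here is a minimal CPU-only sketch of brute-force nearest-neighbor search over embeddings, the kind of operation that vector engines run at much larger scale. It is not the cuVS API. The function names, the toy three-dimensional vectors and the choice of cosine similarity are all assumptions made for illustration.

```python
import math
from typing import List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat zero vectors as matching nothing
    return dot / (norm_a * norm_b)


def top_k(query: Sequence[float],
          corpus: Sequence[Sequence[float]],
          k: int) -> List[Tuple[int, float]]:
    """Score every corpus vector against the query and keep the k best.

    This is exhaustive (brute-force) search: cost grows linearly with
    the corpus size, which is why large deployments use approximate
    indexes and hardware acceleration instead.
    """
    scored = [(i, cosine_similarity(query, v)) for i, v in enumerate(corpus)]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical toy "embeddings"; real ones have hundreds of dimensions.
DOCS = [
    [1.0, 0.0, 0.0],
    [0.9, 0.1, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
]
QUERY = [1.0, 0.05, 0.0]

RESULTS = top_k(QUERY, DOCS, k=2)
for index, score in RESULTS:
    print(f"doc {index}: similarity {score:.4f}")
```

Every query here touches every stored vector, so the work scales with corpus size. Index structures and GPU parallelism exist to cut that per-query cost.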

Powering Enterprise Data Processing Platforms

Google Cloud integrates NVIDIA cuDF to accelerate Apache Spark within Dataproc, and cuDF can also be used within Google Kubernetes Engine (GKE), reducing processing times for massive ETL jobs from hours to seconds while lowering compute costs.

At Snap, which serves more than 946 million active users, NVIDIA cuDF on GKE cut daily data processing costs by 76%. This enables 10 petabytes of data to be analyzed within a three-hour window — saving millions of dollars.

“Our collaboration with NVIDIA and Google Cloud helps us innovate faster for more than a billion Snapchatters worldwide,” said Saral Jain, chief information officer of Snap. “By lowering data processing costs and scaling experiments across petabytes of data, we’re delivering AI-powered experiences more quickly and efficiently.”

IBM watsonx.data is a hybrid, open data platform that includes open source analytics engines such as Apache Spark and Presto for structured data, and a vector engine based on OpenSearch. In early experiments with Nestlé’s Order-to-Cash mart, workloads on watsonx.data accelerated with NVIDIA cuDF ran five times faster at 83% lower cost.

“For a company that serves billions, data underpins decision making across our global operations,” said Chris Wright, chief information and digital officer of Nestlé. “Working with IBM and NVIDIA, a targeted proof of concept has demonstrated the ability to refresh global operations data in a few minutes and at reduced cost. Our focus now is on turning this capability into tangible business impact — further improving decision speed in areas such as manufacturing and warehousing, and scaling these capabilities across our enterprise.”

The Dell AI Data Platform with NVIDIA includes accelerated data engines that enable enterprises to quickly and securely activate their Dell AI Factory with AI-ready data. It features an Apache Spark-based processing engine accelerated with NVIDIA cuDF, delivering up to 3x faster performance, and an enterprise-grade vector database accelerated with NVIDIA cuVS, delivering up to 12x higher throughput for vector indexing compared with CPUs.

“Purpose-built for agentic AI, the Dell AI Data Platform with NVIDIA uses accelerated data processing engines to make multimodal data AI-ready in hours instead of days,” said Michael Dell, chairman and CEO of Dell Technologies.

Oracle announced that Oracle Private AI Services Container can greatly accelerate vector index creation in Oracle AI Database using NVIDIA cuVS, helping organizations speed up AI-enabled decisions with the latest information.

“Enterprise AI is moving from experimentation to production,” said Clay Magouyrk, CEO of Oracle. “Oracle AI Database with NVIDIA technology delivers AI-ready data within minutes, enabling applications that were previously impossible.”

NVIDIA cuDF and cuVS are supported by leading enterprise data platforms including EDB Postgres AI, NetApp, Snowflake, Starburst and VAST Data — setting the foundation for the AI‑powered future of data processing.

Friday, March 20, 2:00 p.m. PT

Quantum Computing Reaches an Inflection Point With NVIDIA NVQLink

At GTC, NVIDIA made NVQLink publicly available through a new application programming interface (API) called cudaq-realtime and shared demonstrations advancing the state of the art in quantum error correction.

NVQLink, first announced at GTC Washington, D.C., in October, provides low-latency, high-throughput connectivity between quantum processors and GPU supercomputing. It is now accessible to the quantum computing community, with product offerings from Dell signaling rapid ecosystem adoption.

The cudaq-realtime API, available in the NVIDIA CUDA-Q software platform, provides open source, turnkey integration of GPU-accelerated supercomputing with quantum processors. With cudaq-realtime, early adopters in the quantum community have demonstrated tight, real-time control of quantum hardware and deployed hybrid quantum-classical applications.

Leading U.S. national labs, including Pacific Northwest National Laboratory and Lawrence Berkeley National Laboratory, along with QPU builders Quantinuum and Infleqtion and quantum software provider Q-CTRL, have adopted NVQLink, in some cases showing order-of-magnitude reductions in decoding and calibration latencies compared with previous work.

NVQLink has also begun deployment in commercial systems, with QPU builder Anyon Computing and quantum systems provider SDT unveiling an NVQLink quantum-GPU system at a Korean commercial data center, heralding the move to building accelerated quantum-supercomputing systems in production environments.

Computer-Aided Engineering

Monday, March 16, 1:30 p.m. PT

NVIDIA Launches cuEST for Accelerated Quantum Chemistry in Semiconductor Design

NVIDIA this week launched NVIDIA cuEST, a new NVIDIA CUDA-X library that shifts electronic-structure calculations onto GPUs. Applied Materials, Samsung, Synopsys and TSMC are among the initial adopters.

A leading-edge chip now contains over 50 billion transistors. Engineering them requires answering fundamental physics questions at the atomic scale: how electrons bond, how they migrate and how they interact across films just a few atoms thick.

“As semiconductor scaling reaches the physical limits of materials, the industry requires a massive increase in computing performance to simulate the quantum mechanics of next-generation chip designs,” said Tim Costa, general manager for industrial and computational engineering at NVIDIA. “With NVIDIA cuEST, industry leaders can move past the quantum bottleneck and take high-fidelity chemical modeling directly into production to accelerate semiconductor innovation.”

Industry Impact

- Applied Materials: Applied Materials uses cuEST-accelerated density functional theory (DFT) to model challenging structures, predict material properties and study reaction pathways.

- Samsung: Samsung integrated cuEST into its internal pipeline, which was already GPU-accelerated, to deliver a further end-to-end speedup of up to 5x for key quantum-chemistry workloads.

- Synopsys: Powered by cuEST, Synopsys QuantumATK expanded its functionality to include Gaussian-basis DFT, accelerating simulations up to 30x for semiconductor workflows.

- TSMC: TSMC uses cuEST’s accelerated quantum chemistry to advance processes for next-generation silicon design.

From the Lab to the Fab

The most common method for atomistic modeling is density functional theory. DFT offers a strong balance between accuracy and scalability; however, its computational cost has limited its use in industry, keeping most applications confined to research. With cuEST, NVIDIA makes high-accuracy quantum chemistry feasible at industrial scale and in real production workflows.

Historically, the industry has relied on CPU clusters to run these simulations, evaluating candidate materials, including gate dielectrics and interconnect metals, one batch at a time over hours or days.

cuEST provides optimized routines so GPUs can accelerate construction of the core matrices of a Gaussian-basis DFT calculation, including the overlap, kinetic energy, nuclear attraction, Coulomb and exchange-correlation matrices. It also supports functional approximations ranging from the standard generalized gradient approximation to hybrid functionals, allowing engineers to balance computational cost with accuracy.
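For readers unfamiliar with these matrices, the sketch below builds the simplest one, the overlap matrix, for normalized s-type primitive Gaussians using the textbook closed-form integral. It is a plain-Python illustration, not the cuEST API. The function names, the exponent of 1.0 and the two-center geometry are assumptions chosen only to keep the example small.

```python
import math
from typing import List, Sequence, Tuple

Center = Tuple[float, float, float]
Primitive = Tuple[float, Center]  # (exponent, center in bohr)


def s_overlap(alpha: float, a: Center, beta: float, b: Center) -> float:
    """Overlap integral of two normalized s-type primitive Gaussians.

    Uses the Gaussian product theorem:
        S = N_a * N_b * (pi / p)^(3/2) * exp(-(alpha * beta / p) * |A - B|^2)
    with p = alpha + beta and N = (2 * exponent / pi)^(3/4).
    """
    p = alpha + beta
    r2 = sum((x - y) ** 2 for x, y in zip(a, b))
    norm = (2.0 * alpha / math.pi) ** 0.75 * (2.0 * beta / math.pi) ** 0.75
    return norm * (math.pi / p) ** 1.5 * math.exp(-(alpha * beta / p) * r2)


def overlap_matrix(basis: Sequence[Primitive]) -> List[List[float]]:
    """Dense overlap matrix S[i][j] over all pairs of basis functions."""
    n = len(basis)
    return [[s_overlap(*basis[i], *basis[j]) for j in range(n)]
            for i in range(n)]


# Hypothetical two-center basis: one s function per center, 1.4 bohr apart.
BASIS: List[Primitive] = [
    (1.0, (0.0, 0.0, 0.0)),
    (1.0, (0.0, 0.0, 1.4)),
]

S = overlap_matrix(BASIS)
for row in S:
    print("  ".join(f"{value:.6f}" for value in row))
```

Each entry depends on only one pair of basis functions, so all entries can be computed independently. That independence is the property that makes this kind of matrix construction a natural fit for GPUs. Production basis sets also include contracted functions and higher angular momenta, which this sketch omits.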

NVIDIA’s goal for cuEST: moving high-fidelity material modeling from the lab to the fab.

Learn more about cuEST by visiting the NVIDIA demo booth and the Synopsys booth at GTC, and dive deeper in the GTC session, “Next-Generation Discovery: Agentic AI for Science, AI-Driven Simulation and GPU-Accelerated Chemistry.”